- Offer Profile

- Computer Vision & Robotics Group

Research Interests

- 3D models from uncalibrated images.

- Object recognition.

- Human-computer interfaces.

- Visual tracking and localisation.

- Visually guided robotics and autonomous systems.

- Augmented reality.

Curves and Surfaces

The reconstruction of surfaces from apparent contours

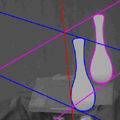

- In this project we aim to recover the shape of arbitrary surfaces from the apparent contours (or outlines) visible from arbitrary views. A key contribution is the introduction of the epipolar parametrization which exploits the epipolar geometry (geometry of viewpoints) to induce a spatio-temporal parametrization of the image curves and surfaces. This generalizes the epipolar geometry of points to curves and surfaces, and allows the recovery of shape under perspective projection and arbitrary camera motion.

The analysis of the degeneracies of the epipolar parametrization

- Singular apparent contours or cusps occur at isolated points, seen as an abrupt contour ending in the outline of an opaque surface. The epipolar parametrization cannot be used to recover surface geometry at these points. In this project, the locus of cusps under viewer motion is exploited to recover the geometry in the vicinity of the cusps. The other case of degeneracy is used to develop an algorithm to recover viewer motion.

Recovery of camera motion from outlines

- It is generally believed that the outlines of a curved surface cannot be used to recover the motion since they are projections of curves which slip over the surface under viewer motion. In this project, the envelope of consecutive contour generators is shown to define special (frontier) points and these points are used to recover the epipolar geometry from image curves.

Circular motion

- In this project, a particularly simple and elegant solution is found for a special type of motion in which an object is placed on a turntable which is rotated in front of a stationary camera. A novel solution is introduced which exploits the symmetry in the envelope of outlines swept out by the rotating surface. This technique uses a single curve tracked over the image sequence and has been successfully used to recover the shape of an arbitrary object from an uncalibrated camera.

Quasi-invariant parametrizations and matching of curves

- In this project we aim to develop a robust algorithm for curve matching. B-splines can be fitted automatically to image edge data and used to group fragments of curves which are projections of bilateral symmetry in the scene. Quasi-invariant parametrizations of image curves are developed to help in the matching of curves. These reduce the order of derivatives required to compute the geometric invariants of curves from fifth to second order, making the invariants less sensitive to image noise and occlusion.

Visually Guided Robots

Visual servoing

- This project takes advantage of the geometric structure of the Lie algebra of the affine transformation. A novel approach to visual servoing exploits a single Jacobian relating robot motion to image deformation, computed once near the target location, to guide the robot over a large range of perturbations. This framework has recently been extended to produce a robust 3D model tracking system which is able to track articulated objects live from video images in the presence of occlusion.

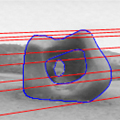

2½D Visual Servoing from Planar Contours

- The aim of this research is to design a complete system for segmenting, matching and tracking planar contours for use in visual servoing. Our system can be used with arbitrary contours of any shape and without any prior knowledge of their models. The system is first shown the target view. A selected contour is automatically extracted and its image shape is stored. The robot and object are then moved and the system automatically identifies the target. The matching step is done together with the estimation of the homography matrix between the two views of the contour. Then, a 2½D visual servoing technique is used to reposition the end-effector of a robot at the target position relative to the planar contour. The system has been successfully tested on several contours with very complex shapes such as leaves, keys and the coastal outlines of islands.
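The homography estimation step mentioned above can be illustrated with a minimal sketch. It assumes exactly four known point correspondences and fixes the bottom-right entry of the matrix to 1, whereas the system described here estimates the homography jointly with contour matching. The function names (`solve_linear`, `estimate_homography`, `apply_homography`) are hypothetical and chosen for illustration only.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def estimate_homography(src, dst):
    """Homography from four (x, y) -> (u, v) correspondences.

    Assumption: the entry H[2][2] is fixed to 1, which excludes
    homographies whose true H[2][2] is zero.
    """
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.append(v)
    h = solve_linear(rows, rhs)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(H, point):
    """Map a 2D point through H using homogeneous coordinates."""
    x, y = point
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    u = (H[0][0] * x + H[0][1] * y + H[0][2]) / w
    v = (H[1][0] * x + H[1][1] * y + H[1][2]) / w
    return (u, v)
```

With noisy contour data, a least-squares estimate over many points would normally be preferred to this four-point solution.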

Image Divergence from Closed Curves

- Visual motion, as perceived by a camera mounted on a robot moving relative to a scene, can be used to aid navigation. Simple cues such as time to contact can in principle be estimated from the divergence of the image velocity field. In practice, however, methods using spatio-temporal derivatives of image velocity have proved too sensitive to image noise to be useful. This project instead considers the temporal evolution of the apparent area of a closed contour (using an extension of Green's theorem in the plane) and aims to recover time to contact and surface orientation reliably. This is exploited in real-time visual docking and obstacle avoidance.
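The area-based idea can be sketched as follows. This is a simplified illustration rather than the project's method: it assumes the contour is given as a polygon, that the surface is fronto-parallel and approached head-on (so the divergence equals the relative rate of change of area and time to contact is 2 divided by the divergence), and it uses a finite difference between two frames. The function names are hypothetical.

```python
def polygon_area(points):
    """Enclosed area of a closed polygon via the shoelace formula,
    a discrete form of Green's theorem in the plane."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0


def time_to_contact(contour_prev, contour_curr, dt):
    """Estimate time to contact from two contours taken dt seconds apart.

    Assumption: fronto-parallel surface and motion along the optical axis,
    so divergence = (dA/dt) / A and time to contact = 2 / divergence.
    """
    area_prev = polygon_area(contour_prev)
    area_curr = polygon_area(contour_curr)
    area_rate = (area_curr - area_prev) / dt
    if area_rate == 0.0:
        return float("inf")  # no apparent expansion: no approach detected
    mean_area = 0.5 * (area_prev + area_curr)
    divergence = area_rate / mean_area
    return 2.0 / divergence
```

Because the area is an integral over the whole contour, it averages out pixel-level noise that would corrupt point-wise velocity derivatives.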

Uncalibrated Stereo Hand-Eye Coordination

- In this project, a simple and robust approximation to stereo, using only the cues available under orthographic projection, is used to build a system which exploits relative disparity (and its gradient) in uncalibrated stereo to guide a robot manipulator to pick up unfamiliar objects in an unstructured scene. The system must cope not only with uncertainty in the shape of the object, but also with uncertainty in the positions and orientations of the camera, the robot and the object.

Man-Machine Interfaces Using Visual Gestures, Pointing

- By detecting and tracking a human hand the system is extended so that the user can point at an object of interest and guide the robotic manipulator to pick it up. The project uses uncalibrated stereo vision and visual tracking of the hand. This makes the system robust to movement of the cameras and of the user. This is just one example of novel man-machine interfaces using computer vision to provide more natural ways of interacting with computers and machines. Some of the earliest examples in this field include a wireless, passive alternative to a 3D mouse which exploits motion parallax cues and an algorithm to detect and track face gaze which exploits symmetry.

Visual Tracking

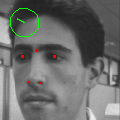

The Temporal Consensus Tracker

- The temporal consensus tracker uses a minimal subset of the data to provide the pose estimate, and a robust regression scheme to select the best subset. Bayesian inference in the regression stage combines measurements taken in one frame with predictions from previous frames, eliminating the need to further filter the pose estimates. The resulting tracker performs very well on the difficult task of tracking a human face, even when the face is partially occluded. Since the tracker is tolerant of noisy, computationally cheap feature detectors, frame-rate operation is comfortably achieved on standard hardware.
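The idea of estimating from minimal subsets and keeping the subset with the most support can be illustrated on a much simpler problem than pose tracking. The sketch below fits a 2D line from pairs of points and selects the pair with the largest consensus set. It is a generic RANSAC-style illustration, not the temporal consensus tracker itself: it omits the Bayesian combination with predictions from previous frames, and it enumerates all pairs rather than sampling. The function names and the tolerance parameter `tol` are hypothetical.

```python
from itertools import combinations


def fit_line(p, q):
    """Line through two points as (a, b, c) with a*x + b*y + c = 0
    and (a, b) a unit normal. Returns None for coincident points."""
    (x0, y0), (x1, y1) = p, q
    a = y1 - y0
    b = x0 - x1
    norm = (a * a + b * b) ** 0.5
    if norm == 0.0:
        return None
    a /= norm
    b /= norm
    c = -(a * x0 + b * y0)
    return (a, b, c)


def consensus_line(points, tol):
    """Try every minimal subset (pair of points) and keep the line
    supported by the largest number of points within distance tol."""
    best_line, best_inliers = None, []
    for p, q in combinations(points, 2):
        line = fit_line(p, q)
        if line is None:
            continue
        a, b, c = line
        inliers = [pt for pt in points
                   if abs(a * pt[0] + b * pt[1] + c) <= tol]
        if len(inliers) > len(best_inliers):
            best_line, best_inliers = line, inliers
    return best_line, best_inliers
```

Outliers, such as features on an occluding object, never dominate the estimate because they rarely agree with one another.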

The MPEG video below shows the temporal consensus algorithm tracking a human face. The orientation of the face is illustrated as a drawing pin in the top left hand corner of each frame.

Automatic Human Face Detection and Localisation

- This project aims to achieve automatic detection and localization of human faces in scenes with no prior information about scale, orientation or viewpoint.

3D Models from Images

PhotoBuilder

- Aiming to build realistic 3D models from photographs of architectural scenes, the PhotoBuilder application reconstructs models from photographs taken from arbitrary viewpoints.

Photorealistic Models from uncalibrated photos

- Using a simple interactive algorithm to generate the initial model and then refining this automatically, the project aims to combine the best parts of automatic and interactive 3D model creation.

3D Television

- The purpose of this project is to display photo-realistic three-dimensional images using off-the-shelf cameras and minimal camera calibration.

Image Segmentation and Grouping

Motion Segmentation for Video Indexing

- A single video contains a vast amount of information; the aim of video indexing is to analyse a video automatically and extract a small amount of characteristic information. This project investigates the extraction of information from the motion in a scene: techniques for segmentation and mosaicing are used to distil a description of the scene that can be searched for.

Image Segmentation

- The "creep and merge" segmentation system aims to solve as many as possible of the reported difficulties with segmentation systems, and produce a single, parameter-free software package implementing the results.

Human Face Detection

- This project aims to achieve automatic detection and localization of human faces in scenes with no prior information about scale, orientation or viewpoint.

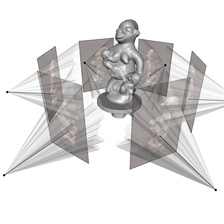

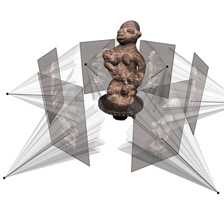

Digital Pygmalion project: from photographs to 3D computer model

- Professor Roberto Cipolla's Digital Pygmalion project brings a handful of photographs of a sculpture to life as a high-resolution 3D computer model. Together with Dr Carlos Hernández Esteban, he has produced breathtaking results, which will guide Antony Gormley in scaling up his sculpture from life-size to over 25 metres high.

"Roberto's work is unique in the world: it's extraordinary to get a fully rotational model from a standard single-lens digital camera." Antony Gormley.

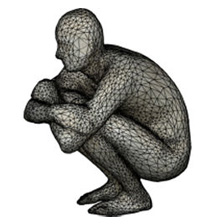

Roberto and Carlos visited the artist recently to take photographs of the sculpture and then used their world-leading computer vision techniques to construct a complete 3D model of the piece. The results are not only technically impressive but are also visually stunning.

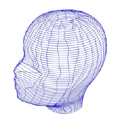

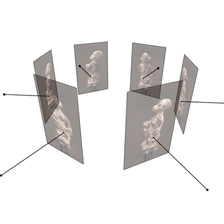

The picture of the sculpture below gives an idea of the underlying mathematical mesh. The software allows the user to look at the structure from any viewpoint. The original texture of the sculpture can be overlaid on this skin. Lighting effects can be added. A full resolution image on a good screen looks perfect.

High resolution colour photos of the object in natural light are taken with a standard off-the-shelf camera. The silhouettes and the main interest points on the object are detected automatically in each of the different photos that have been taken. The position of the camera when each photo was taken can then be calculated.

The silhouette and texture in each photo are then used to guide the "digital sculptor" to carve out the 3D shape. Accurate geometry and an accurate depiction of the appearance of the object are achieved automatically. In summary, it is a new approach to high quality 3D object reconstruction: starting from a sequence of colour images, an algorithm is able to reconstruct both the 3D geometry and the texture.
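The carving idea can be sketched on a toy voxel grid. This is a heavily simplified illustration under stated assumptions: three orthographic views aligned with the coordinate axes and binary silhouettes only, whereas the software described here uses calibrated photographs and texture as well. The names `project` and `carve` are hypothetical.

```python
def project(voxel, axis):
    """Orthographic projection along one coordinate axis:
    drop that coordinate and keep the other two as pixel coordinates."""
    return tuple(c for i, c in enumerate(voxel) if i != axis)


def carve(silhouettes, size):
    """Keep the voxels of a size^3 grid that project inside every silhouette.

    silhouettes maps a viewing axis (0, 1 or 2) to the set of pixels
    lying inside the object's outline in that view.
    """
    kept = set()
    for x in range(size):
        for y in range(size):
            for z in range(size):
                voxel = (x, y, z)
                if all(project(voxel, axis) in mask
                       for axis, mask in silhouettes.items()):
                    kept.add(voxel)
    return kept
```

The result is the largest shape consistent with all the outlines; concavities that never appear in any silhouette are not recovered by silhouettes alone, which is one reason texture is also used.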

Highly accurate 3D modelling is very much in demand for:

- digital archiving of objects, particularly items from museum collections

- face acquisition, which is an important area for the movie and computer games industries

- Internet shopping, where low resolution 3D models are required to sell products successfully online.

The software was recently used to build a 3D model of a Henry Moore sculpture so that it can be viewed by potential buyers from around the world before its auction in London later this year.

Step 1: Image acquisition

Step 2: Camera calibration

Step 3: 3D reconstruction

Step 4: Texture mapping

Antony Gormley sculpture